Empathic AuRea: Exploring the Effects of an Augmented Reality Cue for Emotional Sharing Across Three Face-to-Face Tasks

Abstract

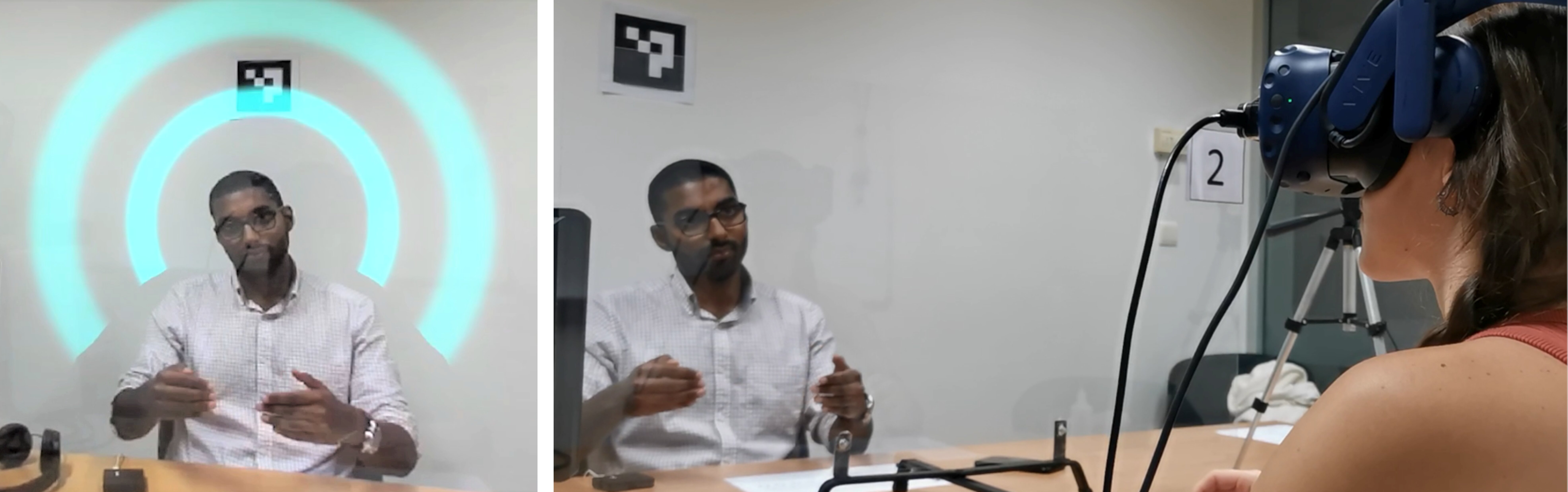

The Empathy-Effective Communication hypothesis states that the better a speaker can understand their listener's emotions, the better they can transmit information; and the better a listener can understand the speaker's emotions, the better they can apprehend that information. Previous emotional sharing systems have used bio-sensing to create a space of emotional understanding between collaborators in remote locations, but how face-to-face communication can benefit from biofeedback has yet to be studied. This study introduces AuRea, a new Augmented Reality communication cue derived from an emotion recognition neural network model trained on electrocardiogram physiological data. The proposed design is meant to facilitate understanding of emotional states, increasing cognitive empathy without compromising the existing verbal, non-verbal, and paraverbal communication cues. We conducted a study in which pairs of participants (N=12) engaged in three tasks, and found that AuRea positively affected performance and emotional understanding but negatively affected memorization.

Takeaways

Emotional cues help, but only for the right tasks

Showing a partner's emotional state through AR improved performance in a collaborative instruction task (tangram assembly) but hurt performance in a memory-heavy storytelling task. The cue helped when the person giving instructions could adapt in real time to their partner's reactions. It became a distraction when the task demanded focused retention.

Match emotional sharing features to tasks that benefit from dynamic adaptation, not tasks requiring sustained concentration.

Emotional sharing is socially asymmetric

The person viewing the emotional cue reported feeling more connected to their partner. The person being sensed reported feeling less connected and more worried, especially with strangers. The system gave one side new insight while making the other feel exposed.

Physiological disclosure systems cannot be evaluated by performance alone. Comfort, consent, and reciprocity are first-class design concerns, not afterthoughts.

Form factor costs are real and separable from the cue itself

Participants found the AR headset physically demanding, reporting eyestrain, motion sickness, and a narrow 720p video see-through view. These issues inflated workload scores, and the headset obscured the face of the decoder (the person wearing it to view the cue), removing natural communication cues like gaze. The emotional cue may have been more effective than the results suggest, with its benefit masked by hardware constraints.

Future work on empathic AR should decouple display form factor effects from the biofeedback signal itself, ideally using optical see-through displays.

Citation

APA

Valente, A., Lopes, D. S., Nunes, N., & Esteves, A. (2022). Empathic AuRea: Exploring the effects of an augmented reality cue for emotional sharing across three face-to-face tasks. In 2022 IEEE Conference on Virtual Reality and 3D User Interfaces (VR) (pp. 158-166). IEEE. https://doi.org/10.1109/VR51125.2022.00034

BibTeX

@inproceedings{valente2022empathic,
  title = {Empathic {AuRea}: Exploring the Effects of an Augmented Reality Cue for Emotional Sharing Across Three Face-to-Face Tasks},
  author = {Valente, A. and Lopes, D. S. and Nunes, N. and Esteves, A.},
  booktitle = {2022 IEEE Conference on Virtual Reality and 3D User Interfaces (VR)},
  pages = {158--166},
  year = {2022},
  month = {mar},
  day = {12--16},
  doi = {10.1109/VR51125.2022.00034},
  url = {https://doi.org/10.1109/VR51125.2022.00034},
  issn = {2642-5254},
  publisher = {IEEE}
}